23 April 2026

Glow Bird Protocol: Syncing Audio to LED Strips on Linux

How I built a real-time audio visualizer that drives WLED LED strips using PipeWire and FFT in Python.

I have an LED strip running along the back of my desk powered by WLED, and it sat there doing mostly static color effects while I worked. It felt like wasted potential. Audio visualizers have existed since Winamp, so how hard could it be to sync the strip to music on Linux in 2025?

The answer: not that hard, but there are a few non-obvious pieces. The result is Glow Bird Protocol: a Python daemon that reads audio from PipeWire and sends real-time FFT data to WLED over UDP.

The audio capture problem on Linux

On Linux, getting raw audio data from a running application is not as simple as it sounds. PulseAudio has monitor sources, ALSA has loopback devices; there are options, but they vary by setup. I wanted something that works reliably on a modern desktop without configuration gymnastics.

PipeWire solves this cleanly. Since most distributions ship PipeWire as their audio server now (often with a PulseAudio compatibility layer), you can use parec — the PulseAudio record utility — to open a monitor stream and get audio frames directly. The key is the @DEFAULT_MONITOR@ device, which captures whatever is playing through your speakers:

    import subprocess

    # SAMPLE_RATE, CHUNK_MSEC and CHUNK_BYTES come from the configuration.
    cmd = [
        "parec",
        "-r",
        "--device=@DEFAULT_MONITOR@",
        f"--rate={SAMPLE_RATE}",
        "--channels=1",
        "--format=s16le",
        f"--latency-msec={max(1, int(CHUNK_MSEC))}",
    ]
    proc = subprocess.Popen(cmd, stdout=subprocess.PIPE, bufsize=CHUNK_BYTES)

From there, the main loop just reads chunks of raw PCM off stdout:

    while True:
        raw = proc.stdout.read(CHUNK_BYTES)
        if not raw:
            break  # parec exited or the stream closed
        # process and send...

No library wrappers, no device enumeration — just a subprocess producing a byte stream.
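One way to keep the read loop robust when parec exits is to wrap it in a small generator. This is only a sketch: `iter_chunks` is a hypothetical helper name, not something from the project, and it works on any binary file-like object so it can be tried without a running audio server.

```python
import io


def iter_chunks(stream, chunk_bytes):
    """Yield fixed-size chunks from a binary stream until it ends."""
    while True:
        raw = stream.read(chunk_bytes)
        if len(raw) < chunk_bytes:
            # End of stream (or a truncated final chunk): stop instead of
            # handing a short buffer to the FFT stage.
            return
        yield raw


# Stand-in for proc.stdout: 1100 bytes of silence gives two full 512-byte chunks.
fake_stdout = io.BytesIO(b"\x00" * 1100)
chunks = list(iter_chunks(fake_stdout, 512))
```

With the real subprocess, the same helper would be called as `iter_chunks(proc.stdout, CHUNK_BYTES)`.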

FFT and mapping frequencies to LEDs

Once you have raw PCM frames, you need to turn them into something useful. A Fast Fourier Transform (FFT) converts the time-domain waveform into a frequency-domain representation: essentially a bar chart of how much energy exists at each frequency.
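To make the "bar chart" idea concrete, here is a small sketch that feeds a synthetic 1500 Hz tone through NumPy's real FFT. The tone, the 256-sample chunk length, and the variable names are assumptions chosen to match the 48 kHz, 512-byte settings used later in this post.

```python
import numpy as np

SAMPLE_RATE = 48000  # Hz, same as the capture settings in this post
N_SAMPLES = 256      # one 512-byte chunk of 16-bit mono audio

# Synthesize a 1500 Hz test tone as signed 16-bit samples.
t = np.arange(N_SAMPLES) / SAMPLE_RATE
tone = (0.5 * 32767 * np.sin(2 * np.pi * 1500 * t)).astype(np.int16)

# rfft returns N/2 + 1 bins for real input; take magnitudes per bin.
magnitudes = np.abs(np.fft.rfft(tone))

# Center frequency of each bin, spaced SAMPLE_RATE / N_SAMPLES = 187.5 Hz apart.
bin_freqs = np.fft.rfftfreq(N_SAMPLES, d=1 / SAMPLE_RATE)

peak_bin = int(np.argmax(magnitudes))
print(f"peak bin {peak_bin} at {bin_freqs[peak_bin]:.1f} Hz")
print(f"first 16 bins cover 0 Hz to {bin_freqs[15]:.1f} Hz")
```

With these settings each bin is 187.5 Hz wide, so the first 16 bins span 0 Hz to roughly 2.8 kHz.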

The audio comes in as signed 16-bit integers (s16le). After the FFT, the first 16 frequency bins are extracted and normalized to the 0–255 range for packing into the UDP packet:

    import numpy as np

    def calculate_fft(audio_chunk):
        # Widen to int32 so that abs(-32768) does not overflow int16.
        audio_data = np.frombuffer(audio_chunk, dtype=np.int16).astype(np.int32)
        raw_level = np.mean(np.abs(audio_data))
        peak_level = int((np.max(np.abs(audio_data)) / 32767) * 255)
        # "Smoothed" here is the mean absolute level scaled to 0-255.
        smoothed_level = int((raw_level / 32767) * 255)
        fft_result = np.abs(np.fft.rfft(audio_data))
        # Scale magnitudes to 0-255; guard against an all-zero (silent) chunk.
        max_magnitude = max(float(np.max(fft_result)), 1e-9)
        fft_normalized = np.interp(fft_result, (0, max_magnitude), (0, 255))
        fft_values = fft_normalized[:16].astype(np.uint8)
        freq_index = np.argmax(fft_result)
        fft_peak_frequency = freq_index * (SAMPLE_RATE / len(audio_data))
        fft_magnitude_sum = np.sum(fft_result)
        return fft_values, raw_level, smoothed_level, peak_level, fft_magnitude_sum, fft_peak_frequency

The result is 16 byte-sized frequency band values plus a few summary metrics (raw level, smoothed level, peak, magnitude sum, dominant frequency) that all get packed into each UDP frame.

Sending to WLED over UDP

Rather than using WLED's standard DRGB protocol (which expects RGB pixel values), Glow Bird Protocol sends a custom packet with the raw FFT analysis data and lets WLED's sound-reactive firmware interpret it. The packet is a fixed 44-byte struct:

    import struct

    def create_udp_packet(fft_values, raw_level, smoothed_level, peak_level, fft_magnitude_sum, fft_peak_frequency):
        return struct.pack(
            '<6s2B2fBB16B2B2f',
            b'00002',                   # header (5 characters, NUL-padded to 6 bytes)
            0, 0,                       # padding (2 bytes)
            float(raw_level),           # raw audio level (4-byte float)
            float(smoothed_level),      # smoothed level (4-byte float)
            peak_level,                 # peak level (1 byte)
            0,                          # padding (1 byte)
            *fft_values,                # 16 FFT band values (16 bytes)
            0, 0,                       # padding (2 bytes)
            float(fft_magnitude_sum),   # total FFT magnitude (4-byte float)
            float(fft_peak_frequency),  # dominant frequency (4-byte float)
        )

UDP means no connection overhead, which keeps latency low enough that the lights feel responsive even on a WiFi-connected ESP32.
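A quick way to sanity-check the packet layout is to ask `struct` for its size and round-trip some placeholder values. The sketch below reuses the format string from `create_udp_packet`; the field values are made up for illustration, and `send_packet` is a hypothetical helper showing how such a packet could be sent with the standard `socket` module.

```python
import socket
import struct

PACKET_FORMAT = "<6s2B2fBB16B2B2f"  # format string from create_udp_packet

# calcsize reports the packed size in bytes for this format.
packet_size = struct.calcsize(PACKET_FORMAT)

# Pack placeholder values (not real audio data) and unpack them again.
packet = struct.pack(
    PACKET_FORMAT,
    b"00002", 0, 0, 1200.0, 9.0, 128, 0, *range(16), 0, 0, 5000.0, 1500.0,
)
fields = struct.unpack(PACKET_FORMAT, packet)
header = fields[0]        # 6 bytes: the 5-character header plus one NUL pad byte
bands = fields[7:23]      # the 16 FFT band values
peak_frequency = fields[26]


def send_packet(sock, data, host, port):
    """Hypothetical helper: send one datagram to the WLED device."""
    sock.sendto(data, (host, port))


print(packet_size, header, peak_frequency)
```

In the daemon, a single `socket.socket(socket.AF_INET, socket.SOCK_DGRAM)` would typically be created once and reused for every frame.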

The latency tuning problem

Getting the strip to feel in sync rather than slightly behind took more work than the FFT itself. A few things that helped:

- Smaller chunk size: CHUNK_BYTES = 512 at 48 kHz gives about 5.3 ms of audio per frame. Larger chunks add visible lag.
- --latency-msec on parec: requesting small fragments from PipeWire directly reduces buffering. Without this, parec may batch up audio internally.
- UDP, not TCP: WLED's HTTP interface has too much overhead for high-frequency updates. UDP is designed for exactly this use case.
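The chunk-size arithmetic is easy to check. The sketch below assumes 16-bit mono audio, as in the parec command earlier; `chunk_duration_ms` is a hypothetical helper name, and the project may derive CHUNK_MSEC differently.

```python
def chunk_duration_ms(chunk_bytes, sample_rate, bytes_per_sample=2, channels=1):
    """Milliseconds of audio contained in one chunk of raw PCM."""
    frames = chunk_bytes / (bytes_per_sample * channels)
    return 1000.0 * frames / sample_rate


# Compare a few chunk sizes at 48 kHz.
for size in (256, 512, 1024, 4096):
    ms = chunk_duration_ms(size, 48000)
    print(f"{size:5d} bytes -> {ms:6.2f} ms per chunk, {1000 / ms:7.1f} chunks/s")
```

A 512-byte chunk holds 256 samples, which is where the 5.3 ms figure comes from; a 4096-byte chunk would already add more than 40 ms before any processing happens.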

With 5.3 ms chunks, that works out to at most roughly 187 updates per second, fast enough that the strip reacts to transients like drum hits and vocal attacks in a way that genuinely feels live.

Configuration

Everything is set in conf.txt — no CLI flags needed:

    [WLED]
    WLED_IP = 192.168.1.50
    WLED_PORT = 4048

    [Audio]
    SAMPLE_RATE = 48000
    CHUNK_BYTES = 512
    gain = 1.5
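Because the file uses INI-style sections, Python's standard `configparser` can read it. This is a sketch of one way to load it, not necessarily how the project does it; `load_settings` and the dictionary keys are hypothetical names.

```python
import configparser

EXAMPLE_CONF = """\
[WLED]
WLED_IP = 192.168.1.50
WLED_PORT = 4048

[Audio]
SAMPLE_RATE = 48000
CHUNK_BYTES = 512
gain = 1.5
"""


def load_settings(text):
    """Parse INI-style text into a plain dict with typed values."""
    parser = configparser.ConfigParser()
    parser.read_string(text)
    return {
        "wled_ip": parser.get("WLED", "WLED_IP"),
        "wled_port": parser.getint("WLED", "WLED_PORT"),
        "sample_rate": parser.getint("Audio", "SAMPLE_RATE"),
        "chunk_bytes": parser.getint("Audio", "CHUNK_BYTES"),
        "gain": parser.getfloat("Audio", "gain"),
    }


settings = load_settings(EXAMPLE_CONF)
print(settings)
```

To read the actual file instead of a string, `parser.read("conf.txt")` would replace the `read_string` call.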

Then just:

    git clone https://github.com/YentlHendrickx/Glow-Bird-Protocol
    cd Glow-Bird-Protocol
    pip install -r requirements.txt
    python main.py

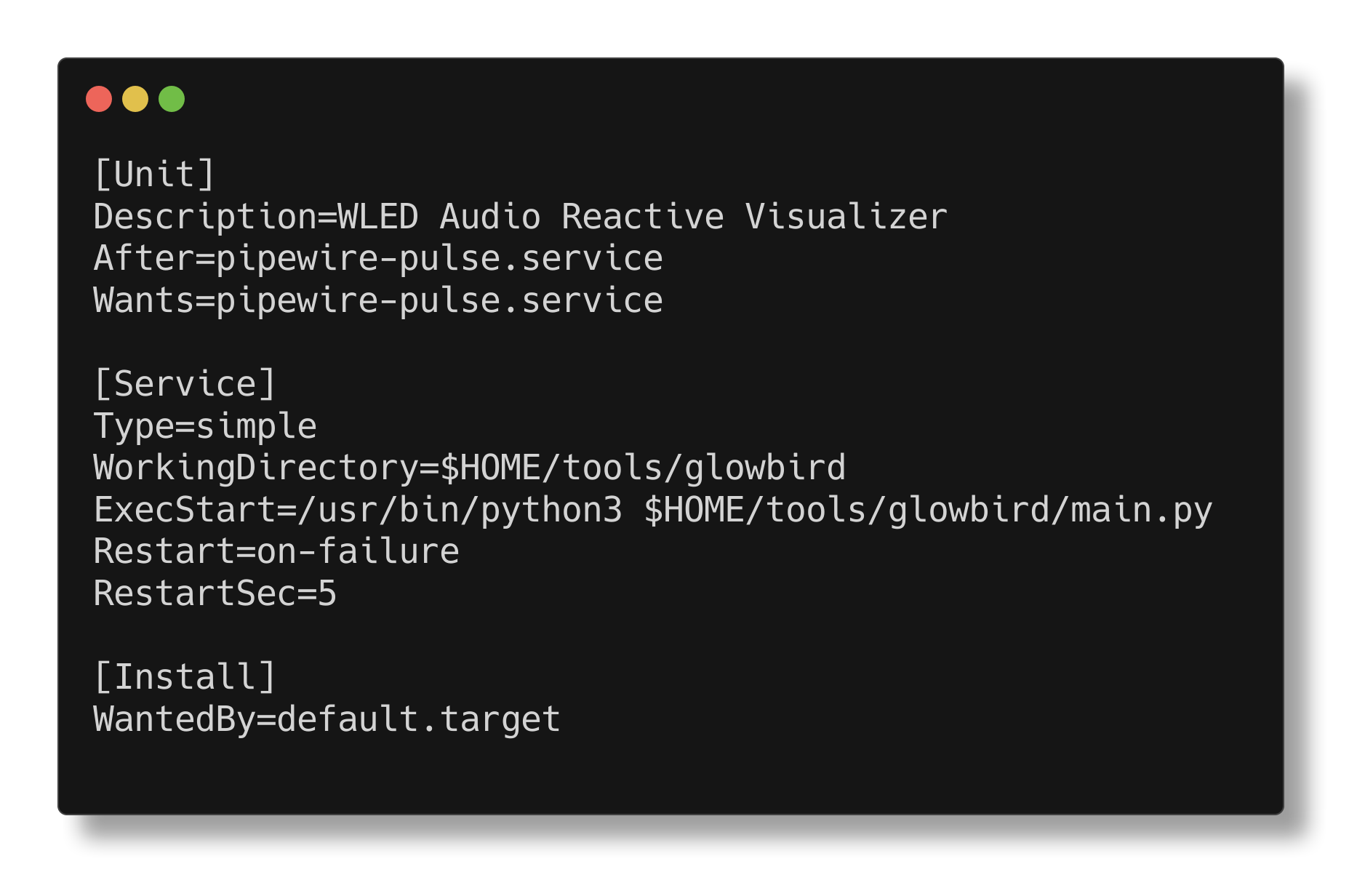

A systemd service file is included in the repo so it can run automatically in the background on login.

Source on GitHub.
